Something’s cooking inside X’s codebase right now, and if you’re a creator, a brand operator, or someone who cares about what’s real online, you need to understand what it means before the rest of the internet catches up.

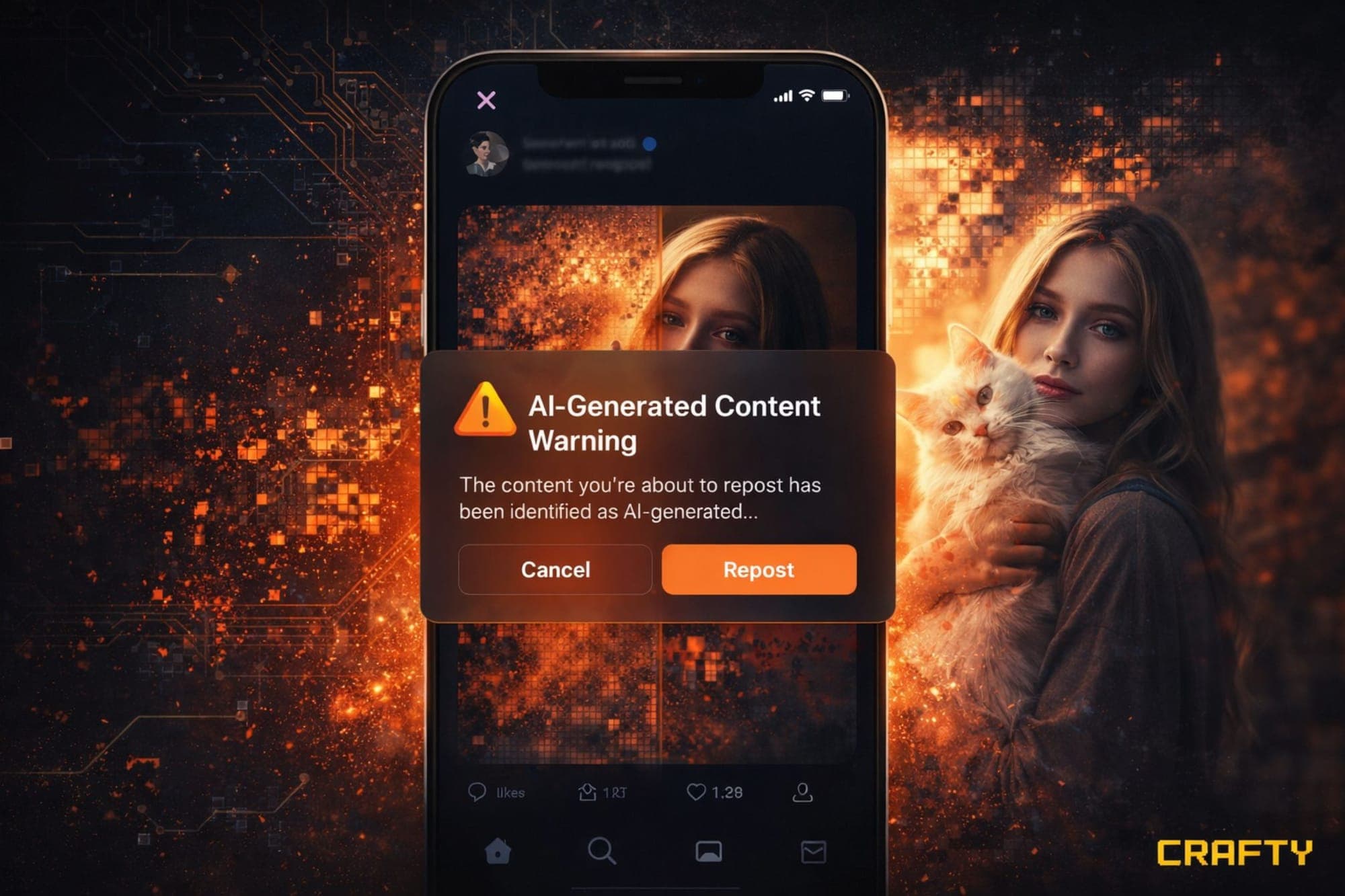

X is testing a pre-share AI content alert. Not a label slapped on after the fact. Not a community note that shows up three hours too late. A prompt that fires BEFORE you repost something its system flags as potentially AI-generated.

Let that sink in for a second.

A platform that processes hundreds of millions of posts per day is actively building infrastructure that introduces friction — a deliberate pause — into the sharing workflow. The message is essentially: “Hey. Do you know what you’re about to amplify?”

And if you understand how information spreads online, you know that’s a massive deal.

Where This Came From

This didn’t materialize out of thin air. X Daily News first spotted the relevant code snippets in the platform’s back-end infrastructure. X hasn’t officially confirmed a launch date, but combined with every other move it has made in the past few months, the trajectory is unmistakable.

Here’s the context that matters: In early 2026, when U.S. military operations involving Iran ramped up, X got hammered. Wired documented hundreds of posts — some racking up millions of views — that spread AI-generated visuals, altered footage, and outright fabricated battlefield content. Video game footage passed off as war zones. AI images indistinguishable from photojournalism to anyone scrolling casually.

X’s existing tools couldn’t contain it in real time. Community Notes helped, but notes have to be written, reviewed, and approved — and that latency is exactly the window misinformation exploits.

Nikita Bier, X’s head of product, acknowledged it publicly on March 3rd: “During times of war, it is critical that people have access to authentic information on the ground. With today’s AI technologies, it is trivial to create content that can mislead people.”

That same announcement included a new policy: any creator who posts AI-generated conflict footage without disclosure gets suspended from Creator Revenue Sharing for 90 days. Second offense? Permanent removal.

The pre-share alert is the next logical layer.

Why Friction Actually Works

If you think a simple pop-up can’t change behavior at scale, the data says otherwise.

Back in 2020, Twitter tested a prompt that appeared when users tried to retweet an article they hadn’t opened. Just a gentle nudge: “Want to read this first?”

The results were significant. People opened articles 40% more often after seeing the prompt. The rate of users opening an article before retweeting jumped 33%. Some users who saw the prompt simply didn’t retweet at all.

That’s not a marginal shift. That’s a behavioral intervention that worked at platform scale with nothing more than a single question.

The AI content alert applies the same principle to a harder problem. Instead of “did you read this?” it’s asking “do you know this might be synthetic?” The friction is identical. The stakes are higher.
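To make the mechanism concrete, here is a minimal sketch of what a pre-share gate could look like. X hasn’t published how its system works, so everything here is an assumption for illustration: the `pre_share_check` function, the `RepostDecision` type, the 0-to-1 detector score, the 0.8 threshold, and the prompt wording are all hypothetical.

```python
from dataclasses import dataclass

# Hypothetical cutoff: X has not published how its detector scores content.
ALERT_THRESHOLD = 0.8


@dataclass
class RepostDecision:
    show_prompt: bool  # whether to interrupt the repost with a prompt
    message: str       # text shown to the user; empty when no prompt fires


def pre_share_check(ai_likelihood: float,
                    threshold: float = ALERT_THRESHOLD) -> RepostDecision:
    """Decide whether to pause a repost, given a detector score in [0, 1]."""
    if not 0.0 <= ai_likelihood <= 1.0:
        raise ValueError("ai_likelihood must be between 0 and 1")
    if ai_likelihood >= threshold:
        # The repost is not blocked; the user is only asked to confirm.
        return RepostDecision(True, "This post may be AI-generated. Repost anyway?")
    return RepostDecision(False, "")


if __name__ == "__main__":
    print(pre_share_check(0.93))  # high score: the prompt fires
    print(pre_share_check(0.12))  # low score: the repost goes straight through
```

Notice that the gate in this sketch never blocks the repost. It only adds one extra decision, which is the same move the 2020 article prompt made.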

The Full Stack X Is Building

The pre-share alert isn’t a standalone feature. It’s one piece of a layered system X is assembling — and understanding the full stack matters if you’re trying to build on this platform.

Grok Watermarks: Content generated through X’s own Grok AI already gets watermarked at the point of creation. Clean, controlled, effective, but only for content made with Grok. Anything from Midjourney, Stable Diffusion, Sora, or any other tool? X adds no watermark of its own, so any marking depends on the originating tool or the user.

“Made with AI” Self-Disclosure Toggle: X is testing a toggle that lets creators voluntarily mark their posts as AI-generated before publishing. Good for honest creators. Useless against bad actors who simply won’t flip the switch.

Community Notes for Manipulated Media: The crowd-sourced fact-checking layer. Research from the University of Washington found it reduces engagement on altered media posts more effectively than on text posts. But it’s reactive and slow relative to the speed of viral content.

Revenue Sharing Penalties: The 90-day suspension policy creates a direct financial consequence. If undisclosed AI content costs you three months of ad revenue, the economic calculus changes — at least for creators who depend on that income.

Pre-Share Alert (In Testing): The newest layer. Acts before content reaches an audience. Uses automated detection rather than user self-reporting.

No single layer solves the problem. But stacked together, they create overlapping defenses that catch different failure modes. That’s smart systems design.
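A quick back-of-the-envelope calculation shows why stacking helps. The sketch below assumes each layer catches undisclosed AI content independently, which real layers won’t do perfectly. The catch rates are invented for illustration; nobody, including X, has published real ones. The `slip_through_rate` helper is likewise hypothetical.

```python
from math import prod


def slip_through_rate(catch_rates):
    """Probability an item evades every layer, assuming the layers are independent."""
    rates = list(catch_rates)
    for rate in rates:
        if not 0.0 <= rate <= 1.0:
            raise ValueError("each catch rate must be between 0 and 1")
    return prod(1.0 - rate for rate in rates)


# Hypothetical per-layer catch rates, NOT measured values.
layers = {
    "self_disclosure_toggle": 0.30,
    "community_notes": 0.40,
    "pre_share_alert": 0.50,
}

if __name__ == "__main__":
    missed = slip_through_rate(layers.values())
    print(f"Evades every layer: {missed:.0%}")
    print(f"Caught by at least one layer: {1 - missed:.0%}")
```

With these made-up numbers, no single layer catches more than half of the content, yet only about 21% evades all three. If the layers tend to miss the same items, the real figure will be worse, which is why layers that fail in different ways matter more than layers that are individually strong.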

Why This Matters Beyond X

X isn’t operating in a vacuum. Every major platform is converging on the same basic framework: self-disclosure backed by automated detection, with penalties for non-compliance.

Meta requires “AI Info” labels on realistic synthetic images, videos, and audio — violations get downranked. YouTube requires disclosure of altered or synthetic content in sensitive areas — violations can mean demonetization. Google’s SynthID embeds invisible watermarks in Gemini-generated content. TikTok labels AI content and requires creators to disclose it.

But here’s the part most people are sleeping on: regulation is about to make all of this mandatory.

Article 50 of the EU AI Act mandates that AI-generated content be labeled in a machine-readable and detectable way. The European Commission published its first draft Code of Practice on Marking and Labeling AI-Generated Content in December 2025. Full applicability date? August 2, 2026.

That’s not a suggestion. That’s a legal deadline with enforcement teeth. Any platform operating in EU jurisdictions — which includes X — must have compliant systems in place or face consequences. The EU has already opened multiple investigations into X over content policies and Grok-generated imagery.

The features X is testing right now aren’t just product decisions. They’re compliance preparations. Getting ahead of August 2026 means X can shape the approach on its own terms instead of scrambling under regulatory pressure.

The Detection Problem Nobody Wants to Talk About

Here’s where we have to be honest about the limitations.

Detecting whether a user opened a link before retweeting it is trivially easy: it’s a binary event the platform can track. Detecting whether an image, video, or text was generated by AI is a different class of problem entirely, because the answer is a probabilistic estimate rather than a logged event.

Current AI detection tools — both text-based and visual — are imperfect. They produce false positives and false negatives. They struggle with content that blends human and AI contributions. They can be fooled by post-processing, compression, screenshot-and-reupload cycles, and other simple evasion techniques.

If X’s pre-share alert fires incorrectly — flagging human-created content as AI-generated — it risks undermining creator trust and cluttering the user experience. If it misses actual AI content, it creates false confidence that unflagged material is authentic.

The accuracy threshold matters enormously. Too many false positives will lead users to develop “alert fatigue” and start ignoring the prompts entirely. Too many false negatives, and the feature becomes performative.
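The alert-fatigue risk is easier to see with numbers. The sketch below uses Bayes’ rule to estimate what share of fired alerts would actually point at AI-generated content. Every input is a hypothetical value chosen for illustration, since X hasn’t disclosed detector accuracy or how much of what gets reposted is synthetic. The `alert_precision` function name is also an assumption.

```python
def alert_precision(prevalence, true_positive_rate, false_positive_rate):
    """Share of fired alerts that point at genuinely AI-generated content.

    prevalence: fraction of reposted items that are AI-generated
    true_positive_rate: fraction of AI items the detector flags
    false_positive_rate: fraction of human-made items the detector flags
    """
    flagged_ai = prevalence * true_positive_rate
    flagged_human = (1.0 - prevalence) * false_positive_rate
    total_flagged = flagged_ai + flagged_human
    if total_flagged == 0:
        return 0.0  # the detector never fires, so there is nothing to measure
    return flagged_ai / total_flagged


if __name__ == "__main__":
    # Hypothetical scenario: 5% of reposted items are AI-generated and the
    # detector catches 90% of them.
    for fpr in (0.05, 0.01):
        precision = alert_precision(0.05, 0.90, fpr)
        print(f"false positive rate {fpr:.0%}: {precision:.0%} of alerts are correct")
```

Under these assumptions, a detector that wrongly flags 5% of human-made content is right on only about 49% of its alerts, so roughly every second prompt is a false alarm. Cut the false positive rate to 1% and about 83% of alerts are correct. When most content is human-made, small changes in the false positive rate decide whether users learn to trust the prompt or to dismiss it.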

This is the hardest technical challenge in the entire stack. Watermarking content at the point of creation (like Grok watermarks or Google’s SynthID) is elegant because it embeds provenance from the start. Detecting AI content after the fact, especially content created by third-party tools and potentially modified before upload, is a fundamentally harder problem.

X hasn’t disclosed what detection methods it’s using. The quality of the entire feature depends on the answer.

What This Means If You’re Building on X

If you’re a creator or a brand building an audience on X in 2026, here’s what matters practically:

Transparency is becoming non-negotiable. The direction across every platform and every regulatory body is the same: if you use AI in content creation, disclose it. The creators who get ahead of this — who build disclosure into their workflow now — will be positioned as trustworthy when the rules tighten. The ones who get caught not disclosing will pay the reputational cost.

Your content strategy needs an authenticity layer. When AI content alerts become widespread, audiences will increasingly distinguish between flagged and unflagged content. Content that reads as authentically human — or that transparently discloses AI assistance — will carry more weight than content that tries to pass off synthetic material as organic.

Revenue is at stake. If you’re in X’s Creator Revenue Sharing program, the 90-day suspension policy is real. One undisclosed AI-generated conflict video could cost you roughly a quarter of your annual platform income, and a second offense removes you from the program permanently. The risk-reward math on undisclosed AI content just changed dramatically.

Understand the layered system. Don’t think of AI content policy as a single rule. Think of it as a stack: watermarks, self-disclosure toggles, community notes, revenue penalties, pre-share alerts, and regulatory requirements. Each layer has gaps. Together, they create an environment where undisclosed AI content is increasingly difficult to distribute without consequence.

The EU deadline is everyone’s deadline. Even if you’re not based in the EU, if your audience includes EU users — and on a global platform like X, it almost certainly does — the August 2026 compliance date affects you. Platforms will implement labeling systems to satisfy the strictest regulatory requirements, and those systems will apply globally.
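To put a rough number on the 90-day suspension mentioned under “Revenue is at stake,” here is a small calculation. It assumes your payouts are spread evenly across the year, which won’t hold for every creator. The $12,000 annual figure is an invented example, not X data, and the function names are hypothetical.

```python
SUSPENSION_DAYS = 90  # first-offense suspension length described in the article
DAYS_PER_YEAR = 365


def share_of_year_lost(suspension_days=SUSPENSION_DAYS, days_per_year=DAYS_PER_YEAR):
    """Fraction of a year's payouts missed, assuming income is spread evenly."""
    return suspension_days / days_per_year


def income_lost(annual_income, suspension_days=SUSPENSION_DAYS):
    """Payouts forfeited during the suspension, under the same even-income assumption."""
    return annual_income * share_of_year_lost(suspension_days)


if __name__ == "__main__":
    hypothetical_annual_income = 12_000  # invented example figure, in dollars
    print(f"Share of the year lost: {share_of_year_lost():.1%}")
    print(f"Income lost: ${income_lost(hypothetical_annual_income):,.0f}")
```

Ninety days works out to just under 25% of the year, so a creator earning a hypothetical $12,000 annually would forfeit close to $3,000 from a single undisclosed post.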

The Bigger Picture

What’s happening on X is part of something much larger. By some estimates, we crossed a threshold in 2025, when AI-generated content surpassed human-written content online for the first time. That’s not a trend. That’s a phase change.

The internet as we’ve known it — where seeing something meant it probably happened, where a photo was evidence, where video was proof — that internet is gone. The new information environment requires new infrastructure: watermarks, detection systems, disclosure frameworks, regulatory mandates, and yes, friction-based interventions that ask you to pause before you amplify.

X’s pre-share AI alert is one small piece of that infrastructure. It’s imperfect. It’s early. The detection technology it relies on has real limitations. But the principle it embodies — that platforms have a responsibility to help users understand what they’re sharing — is the right one.

The platforms that build this infrastructure well will earn trust. The ones that don’t will lose it. And the creators and brands that understand this shift early will have an enormous advantage over those who don’t.

The question was never whether this was coming. The question was when. And the answer, based on what’s happening inside X’s codebase right now, is: soon.

This article is based on reporting originally surfaced by X Daily News regarding code analysis of X's back-end infrastructure, with additional context from Wired's coverage of AI-generated conflict content, the University of Washington's research on Community Notes effectiveness, Hootsuite's 2025 Social Trends data, and the European Commission's published Code of Practice on AI content labeling.